For about the last month, I’ve been mining Ethereum with a GTX 1060 (“Rack 2U GPU Compute Node”), a GTX 1070 (“Mira”), and an R9 290X (from “Absinthe”), in part to see what the hardware can do. And I’ve been so impressed with the R9’s performance that I decided to build another standalone node for Ethereum mining using another AMD graphics card.

The graphics card is the only new contribution to this build. Everything else was hardware I already had on hand.

New graphics card

That is the Sapphire Nitro+ RX 580. Specifically I picked up the 4GB model. My only slight concern was power consumption.

I managed to find the Nitro+ card as an open box at Micro Center for a very nice markdown. If not for the markdown, however, I would not have gone with this as it’s one of the more expensive RX 580s on the market. I would’ve instead looked for a lesser RX 580, or possibly looked for an RX 570 instead.

Initial form

- Chassis: PlinkUSA IPC-G4380S 4U

- CPU and cooling: AMD FX-8350 with Noctua NH-D9L

- Mainboard: Gigabyte 990FXA-UD3

- RAM: 8GB DDR3-1333

- Power supply: Corsair AX860

- OS: Ubuntu Server 16.04.3 LTS

In my 10 Gigabit Ethernet series, I mentioned building a custom switch from the above hardware. And after disassembling that switch to replace it with an off-the-shelf 10GbE SFP+ switch, I never reprovisioned that hardware for anything else, despite planning to do so.

So assembling the system was pretty straightforward: drop the new graphics card in, plug it up, find something to act as primary storage, and go.

One thing that was frustratingly strange: Ubuntu did NOT want to play nice with the onboard Gigabit NIC, or with a separate TP-Link NIC I attempted to use. I was able to get the networking functional with another HP 4-port Gigabit NIC. Fedora 26, however, had no issues playing nice with the onboard NIC.

But this was only a temporary build. I had no intention of leaving this card plugged into the FX-8350. Again, the initial build used this platform merely because it was mostly assembled already, and I initially couldn’t find the mainboard and processor I wanted to use to drive this mining machine. I did find them later that day, so I decided to let the system run and swap things out the next day.

Intermediate form

- CPU and cooling: AMD Athlon 64 X2 4200+ with Noctua NH-D9L

- Mainboard: MSI KN9 Ultra SLI-F

- RAM: 2GB DDR2-800

Wait, a 12-year-old platform driving a modern graphics card? I know what you might be thinking: bottleneck! Except… no.

While this combination certainly is not powerful enough to drive this card for gaming, it’s more than enough for computational tasks such as BOINC, Folding@Home (though you’d really want a better CPU), and Ethereum mining. The aforementioned R9 290X is running on an Athlon 64 X2 3800+ system without a problem. It overtook my GTX 1060 on reported shares, despite the 1060 having a several-day head start, and is reporting a better hash rate than my GTX 1070.

So… yeah. As I’ve pointed out before, most who scream “bottleneck” are those who have no clue how all of this stuff works together.

The slow lane

That being said, though, the RX 580’s performance out of the box left much to be desired: 18MH/s, when others are reporting mid 20s to near 30. What gives? I mean, the R9 290X was outperforming it. These are the hash rates reported to the pool by ethminer:

- GTX 1070 8GB: 25.4MH/s

- RX 580 4GB: 18.4MH/s

- R9 290X 4GB: 24.8MH/s

- GTX 1060 3GB: 19.9MH/s
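To put the gap in perspective, here is a small sketch that ranks the cards and expresses each rate as a percentage difference from the RX 580. The numbers are the ones listed above; the function name `relative_to` is just something made up for this illustration.

```python
# Hash rates in MH/s, as listed above (reported to the pool by ethminer).
rates_mhs = {
    "GTX 1070 8GB": 25.4,
    "RX 580 4GB": 18.4,
    "R9 290X 4GB": 24.8,
    "GTX 1060 3GB": 19.9,
}


def relative_to(baseline, rates):
    """Return each card's rate as a percentage difference from the baseline card."""
    base = rates[baseline]
    return {card: (rate - base) / base * 100 for card, rate in rates.items()}


if __name__ == "__main__":
    diffs = relative_to("RX 580 4GB", rates_mhs)
    # Print fastest card first.
    for card, rate in sorted(rates_mhs.items(), key=lambda kv: kv[1], reverse=True):
        print(f"{card:<14} {rate:5.1f} MH/s  {diffs[card]:+6.1f}% vs RX 580")
```

By this measure the R9 290X was running roughly a third faster than the brand new RX 580.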

I thought system memory was holding back the mining software, so I swapped 4GB into the system. No difference. I saw a slight improvement swapping in Claymore’s Dual-Miner for the original ethminer, but it still wasn’t anywhere near what I thought I should’ve been seeing.

The GTX 1060 rig had been offline for a few days, so I moved the mainboard (Athlon X4 860K) from that into the 4U chassis, installed the RX 580 into that, then swapped out Ubuntu for Windows 10. I pulled down the Claymore miner for Windows and ran it… No significant difference in hash rate.

Which rules out the platform as the culprit, not that I had any reason to think it would be. The real reason for the platform change was my concern that the Linux driver was holding the card back, and that the Windows driver would give a performance boost. Out of the box, it didn’t.

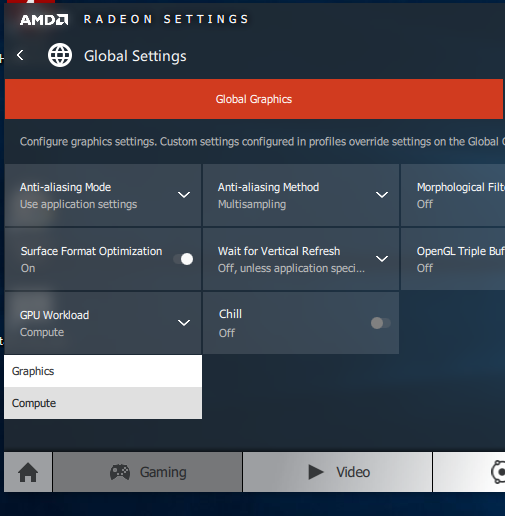

But setting the “GPU Workload” setting to “Compute” instead of “Gaming” boosted the hash rate to about 22.5MH/s. Time to overclock.

One thing I didn’t realize ahead of this: the 4GB RX cards have their memory clocked at 1750MHz, while the 8GB RX cards have memory clocked at 2000MHz. Which definitely explains the lackluster performance I had out of the box — and likely why it was returned to Micro Center.

And in reading about how to get better mining performance, everything I read said to focus on memory, not core speed.
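A rough way to see why memory matters so much: Ethash reads 64 chunks of 128 bytes from the DAG for every hash, so memory bandwidth puts a hard ceiling on the hash rate. The sketch below works that out for the clocks mentioned above. It assumes the RX 580's 256-bit memory bus and GDDR5's four transfers per reported clock (1750MHz works out to 7Gbps per pin). These are back-of-the-envelope upper bounds, not predictions; real cards land below them because of memory timings and other overhead.

```python
ETHASH_BYTES_PER_HASH = 64 * 128   # 64 DAG accesses of 128 bytes each
BUS_WIDTH_BITS = 256               # assumption: RX 580 memory bus width
GDDR5_TRANSFERS_PER_CLOCK = 4      # assumption: 1750 MHz reported -> 7000 MT/s


def bandwidth_gb_s(mem_clock_mhz):
    """Theoretical memory bandwidth in GB/s for a given reported memory clock."""
    transfers_per_s = mem_clock_mhz * 1e6 * GDDR5_TRANSFERS_PER_CLOCK
    return transfers_per_s * BUS_WIDTH_BITS / 8 / 1e9


def ethash_ceiling_mhs(mem_clock_mhz):
    """Upper bound on Ethash rate in MH/s if every byte of bandwidth were used."""
    return bandwidth_gb_s(mem_clock_mhz) * 1e9 / ETHASH_BYTES_PER_HASH / 1e6


if __name__ == "__main__":
    for clock in (1750, 2000):
        print(f"{clock} MHz: {bandwidth_gb_s(clock):.0f} GB/s, "
              f"ceiling {ethash_ceiling_mhs(clock):.1f} MH/s")
```

Under those assumptions the ceiling scales linearly with memory clock, which is why a memory overclock shows up almost directly in the hash rate while a core overclock mostly doesn't.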

So with MSI Afterburner, I first turned up the fan to drop the core temperature. Then I started bumping the memory with the miner running in the background to provide an instant stability and performance check. I was able to push it to 2050MHz. At 2100MHz, the system locked up. I was not interested in dialing it in any further, as any improvements would’ve been within margin of error.
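The bump-and-check routine above is essentially a linear step search. Here is a minimal sketch of the idea. The 50MHz step size is an assumption, and `simulated_is_stable` is a hypothetical stand-in for "run the miner and watch for a lockup", modeled on what this particular card did. Nothing here talks to Afterburner or a real GPU.

```python
def find_highest_stable_clock(start_mhz, step_mhz, limit_mhz, is_stable):
    """Raise the clock one step at a time and return the last clock that passed."""
    best = start_mhz
    clock = start_mhz + step_mhz
    while clock <= limit_mhz:
        if not is_stable(clock):
            break  # in real life this is a lockup and a reboot
        best = clock
        clock += step_mhz
    return best


def simulated_is_stable(clock_mhz):
    # Hypothetical model of this card: fine up to 2050 MHz, locks up at 2100 MHz.
    return clock_mhz <= 2050


if __name__ == "__main__":
    result = find_highest_stable_clock(1750, 50, 2200, simulated_is_stable)
    print(f"Highest stable memory clock: {result} MHz")
```

A smaller step would pin down the limit more precisely, at the cost of more test runs for very little extra hash rate.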

Final verdict: ~26MH/s. About on par with my GTX 1070. And that’s an almost 45% increase over the 18MH/s out of the box, just from setting the driver to Compute and overclocking the memory.
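For anyone who wants to check the arithmetic, here is a quick sketch of the gains at each stage. It uses the 18.4MH/s figure from the ethminer list as the stock rate. Measured from a rounded 18MH/s, the total comes out around 44%; measured from 18.4MH/s, it is closer to 41%.

```python
def pct_gain(before, after):
    """Percentage increase going from `before` to `after`."""
    return (after - before) / before * 100


stock_mhs = 18.4         # out of the box, per the ethminer list
compute_mode_mhs = 22.5  # after switching GPU Workload to Compute
overclocked_mhs = 26.0   # after the memory overclock (approximate)

if __name__ == "__main__":
    print(f"Compute mode:    {pct_gain(stock_mhs, compute_mode_mhs):.1f}%")
    print(f"Memory overclock: {pct_gain(compute_mode_mhs, overclocked_mhs):.1f}%")
    print(f"Total from 18.4: {pct_gain(stock_mhs, overclocked_mhs):.1f}%")
    print(f"Total from 18.0: {pct_gain(18.0, overclocked_mhs):.1f}%")
```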

The fact I was able to get 24.4MH/s out of the R9 290X, running the AMD driver on Ubuntu Server with no additional configuration (since I don’t know if you can configure it further), shows I must have a really, really good R9 290X, since it was only 10% lower than my GTX 1070 on reported hash rates from the mining software.

Until I swapped out ethminer for Claymore’s miner. Then it overtook my GTX 1070 on hash rate.

Other systems

I mentioned before that this isn’t the only mining rig I have set up currently. Along with Mira, I have two other systems, one with the aforementioned R9 290X and one with the GTX 1060, both running Ubuntu Server 16.04.3 LTS and using Claymore’s miner.

First system:

- CPU and cooling: AMD Athlon 64 X2 3800+ with Noctua NH-L9a

- RAM: 4GB DDR2-800

- Mainboard: Abit KN9 Ultra

- Graphics card: XFX “Double-D” R9 290X 4GB (water cooled)

- Hash rate: 27.5 MH/s

Right now this system is sitting in an open-style setup. I’m working to move it, and the water cooling setup, into another 4U chassis. I just need to, first, find a DDC pump or possibly use an aquarium pump inside a custom reservoir.

Second system:

- CPU and cooling: AMD Athlon 64 X2 4200+ with Noctua NH-L9a

- RAM: 4GB DDR2-800

- Mainboard: MSI K9N4 Ultra-SLI

- Graphics card: Zotac GTX 1060 3GB

- Hash rate: 19.8 MH/s

Not much to write home about at the moment. I’m considering swapping this into one of the 990FX platforms to use Windows 10 so I can overclock it and see if I can get any additional performance from it, since it’s a hell of a lot easier to overclock a graphics card on Windows.

The GTX 1060 also is not water cooled, but that might change as well, though using an AIO and not a custom loop. Which is a reason to swap it into a 4U chassis and out of the 2U chassis that currently houses it. But I’d also need another rack to hold that.

Recommendations

There really are only three graphics cards to recommend out of what’s on the market, in my opinion: the GTX 1060, RX 570, or RX 580. As demonstrated, the RX 580 is the better performer, but also has higher power requirements compared to the GTX 1060.

So if you pick up a GTX 1060, you can save a little money and get the 3GB model and still have a decent hash rate. You can even try overclocking it on Windows if you desire. The 6GB model may give you better performance (since it has more CUDA cores), but it’s up to you as to whether you feel it’s worth the extra cost. The mini versions work well, but the full-length cards will provide better cooling on the core.

For AMD RX cards, run those on a Windows system with the driver set to “Compute”. You can also save a little money and grab the 4GB version. Just make sure to overclock the memory.

* * * * *

If you found this article informative, consider dropping me a donation via PayPal or ETH.