- Putting older hardware to work

- Colony West Project

- Adding the GT 620

- Follow-up on ASRock BTC Pro and other options

- More proof of concept

- Adding a GTX 680 and water cooling

- Finalizing the graphics host

- ASRock BTC Pro Kit

- No cabinet, yet…

- Finally a cabinet!… frame

- Rack water cooling

- Rack water cooling, cont.

- Follow-up on Colony West

- Revisiting bottlenecking, or why most don’t use the term correctly

- Revisiting the colony

- Running Folding@Home headless on Fedora Server

- Rack 2U GPU compute node

- Volunteer distributed computing is important

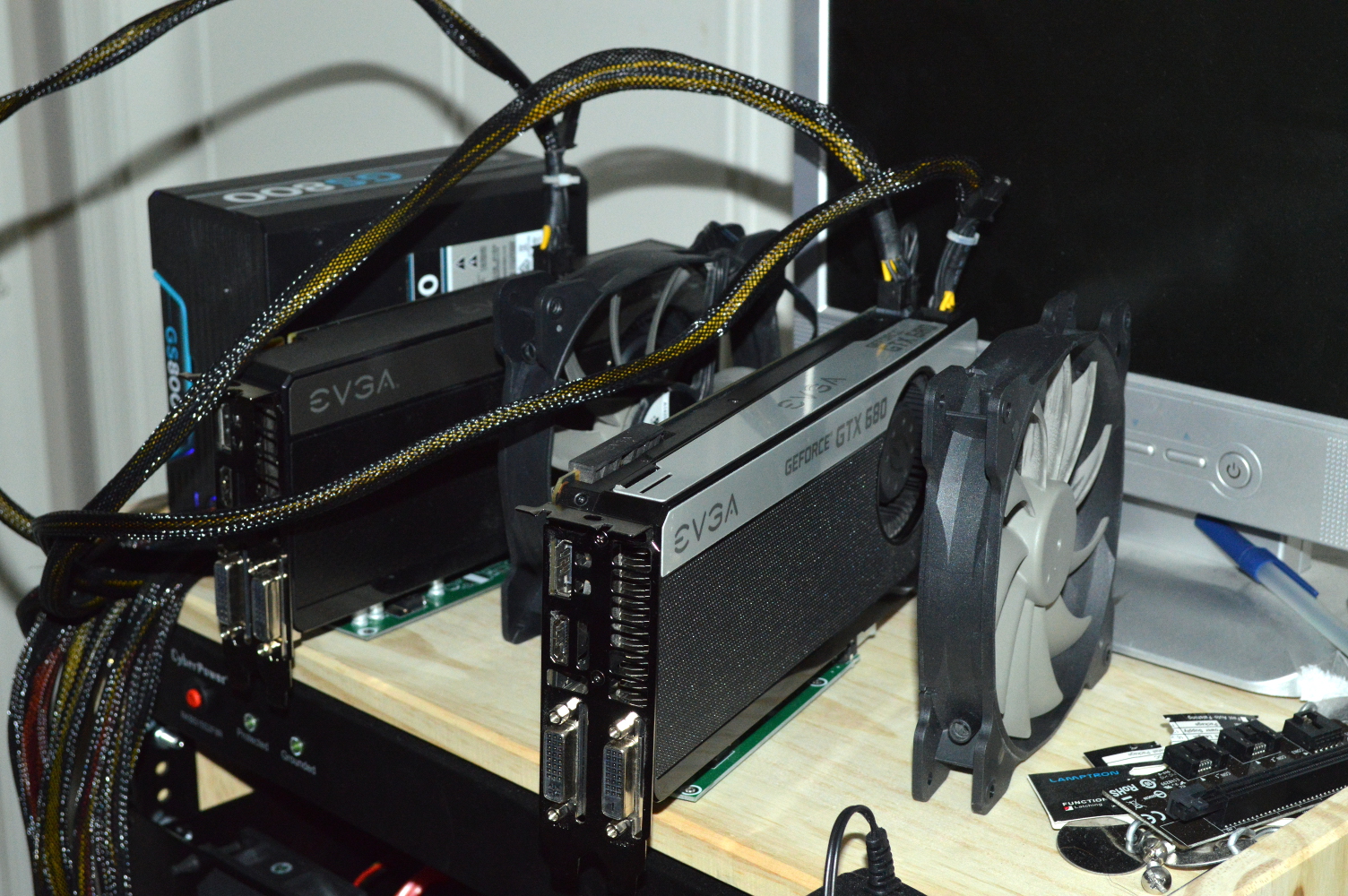

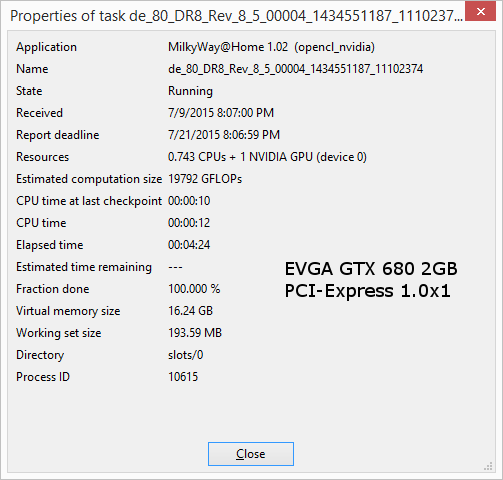

Time to finalize the X2 system and also do a little experimenting. I ordered a used GTX 680 through eBay because I wanted to test how well it’d work through the PCI-Express extender connected to a PCI-Express 1.0 x1 slot. It replaced the GT 620 for this testing. I mentioned in the previous article that I intended to buy a pair of GTX 680s to make use of the Koolance GTX 680 water blocks I still have.

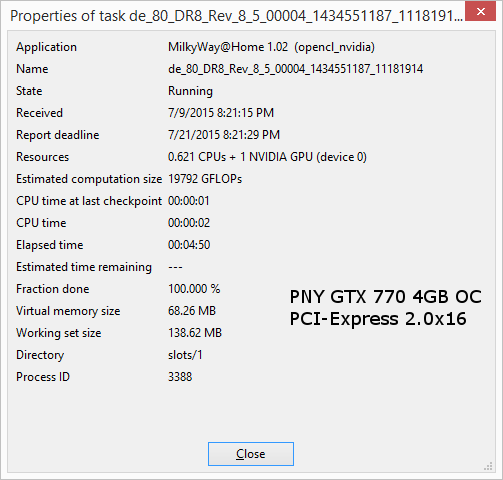

But again, the idea here was testing throughput: would the 1.0 x1 slot constrict communication with the card? For reference, the GTX 770, which is practically the same processor as the GTX 680, is topping out at 68 GFLOPS on the Milkyway@Home jobs when plugged into a PCI-Express 2.0 x16 slot. Note as well that the GTX 770s have 4GB of memory, whereas the GTX 680 I purchased has only 2GB, the same as the GT 620 and GTX 660s.
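To put the size of that gap in numbers, here is a rough sketch of the theoretical one-way bandwidth for each slot. It assumes only the published per-lane signalling rates (2.5 GT/s for PCI-Express 1.0, 5 GT/s for 2.0) and the 8b/10b encoding both generations use. The function and variable names are made up for this illustration, and real-world throughput will be lower than these ceilings.

```python
# Theoretical per-direction PCI-Express bandwidth, from the per-lane
# signalling rate and the 8b/10b line encoding used by PCIe 1.x and 2.x.
TRANSFER_RATE_GT_S = {"1.0": 2.5, "2.0": 5.0}  # gigatransfers/s per lane
ENCODING_EFFICIENCY = 8 / 10  # 8b/10b: 8 data bits for every 10 bits sent


def pcie_bandwidth_mb_s(generation: str, lanes: int) -> float:
    """Return the theoretical one-way bandwidth in MB/s for a PCIe 1.0/2.0 link."""
    gigabits_per_s = TRANSFER_RATE_GT_S[generation] * ENCODING_EFFICIENCY * lanes
    return gigabits_per_s * 1000 / 8  # Gbit/s -> MB/s


x1_gen1 = pcie_bandwidth_mb_s("1.0", 1)    # the slot behind the extender
x16_gen2 = pcie_bandwidth_mb_s("2.0", 16)  # the slot the GTX 770 sits in

print(f"PCIe 1.0 x1 : {x1_gen1:.0f} MB/s")
print(f"PCIe 2.0 x16: {x16_gen2:.0f} MB/s")
print(f"ratio       : {x16_gen2 / x1_gen1:.0f}x")
```

On paper the x1 slot has a small fraction of the x16 slot's bandwidth, so whether it matters comes down to how much data the job actually moves across the bus while it runs.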

So what’re the results?

The GTX 680 actually performs better. On a similar job, the GTX 680 finished about 25 seconds sooner. How can this be?

The only explanation I can see is the operating system. My FX-8350 system runs Windows 8.1 Pro, which is going to compete with the Berkeley client for the graphics subsystem. The X2 is a headless Linux server with no graphical windowing system installed, meaning the Berkeley client has full access to the graphics card for processing.

But this shows that for the GTX 680, and by extension the GTX 770, the PCI-Express 1.0 x1 slot is not going to be a constriction, at least on these Milkyway@Home jobs. I’m now very curious as to how well a GTX 780, or even a GTX 980, would perform on this.

Hell, I’d even be willing to test a Titan X on this. Anyone care to lend me one?

Radiator panels

The graphics cards are going to be water cooled in this setup. Eventually. But part of shopping around was trying to figure out how best to do this. I considered a custom enclosure for holding radiators, a pump and reservoir, but decided that would be overkill. And extremely expensive. So then the idea came to mind of just bolting the radiators to a panel with the pump and reservoir either bolted to the radiator or sitting on a shelf behind it. I opted for the latter.

This wasn’t just about saving money but eliminating needless complication. Going with a panel would be simple. An enclosure? Not so much. I’d either have to modify an existing enclosure or have something custom fabricated. Instead I went with a custom cut panel.

Previously I engaged Protocase about having a custom enclosure built. This time, I decided to go across the pond and engage a company in the United Kingdom called All Metal Parts. They have a 3U fan panel for three 120mm fans, so I contacted them about a custom panel for a radiator. Their FAQ page quoted £40 for one panel or £60 for two, plus shipping.

Specifically I’ll be using the EX360 radiator from XSPC on this, though I’m not sure yet if I’ll have the fans in push or pull. If I go for push, I’ll probably have the panel sandwiched between the fans and the radiator. All Metal Parts held to the £60 for the panels plus £28 for shipping, which is about US$140 total. I was hoping for less since what I originally asked for would’ve been a modification of a panel they already sold.

The design they sent for approval, after asking my opinion in advance, has the opening in the panel matching the side of the radiator. In my request I provided a link to the product page for the radiator, which has a diagram on it, making it easy to design the panel. My only note was to make sure the screw holes were 4mm, large enough to allow a #6-32 screw to pass through.
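As a quick sanity check on the 4mm figure, the sketch below works out how much room a #6-32 screw has in a hole that size. It assumes the standard rule for numbered Unified screw sizes (major diameter in inches = 0.060 + 0.013 × gauge number). The helper names are invented for this example, and it ignores manufacturing tolerances on both the screw and the hole.

```python
INCH_TO_MM = 25.4


def numbered_screw_major_diameter_in(gauge: int) -> float:
    """Nominal major (outer thread) diameter in inches for a numbered screw size."""
    return 0.060 + 0.013 * gauge


def clearance_per_side_mm(hole_mm: float, screw_mm: float) -> float:
    """Gap on each side of the screw when it is centered in the hole."""
    return (hole_mm - screw_mm) / 2


screw_mm = numbered_screw_major_diameter_in(6) * INCH_TO_MM  # #6-32
gap_mm = clearance_per_side_mm(4.0, screw_mm)

print(f"#6-32 major diameter: {screw_mm:.2f} mm")
print(f"clearance per side in a 4 mm hole: {gap_mm:.2f} mm")
```

A nominal #6 screw comes out at roughly 3.5mm across the threads, so a 4mm hole lets it pass with a little slack for lining the radiator up against the panel.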

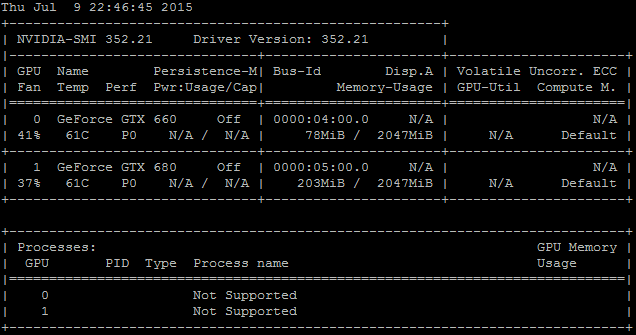

The desire to water cool this setup is purely about temperatures. For one, the GTX 660 and 680 sit in the lower 60s C while the Berkeley client is running, and I know that a liquid cooling loop will have them sitting significantly lower, probably even in the 30s C.

Silence is actually not a major consideration in this. As the image above shows, the blowers aren’t spinning much, hovering around 40%, which shows that the cards aren’t being taxed all that much. But it also means there’s not a lot of noise to curtail, since the setup shown above actually isn’t all that noisy. As such I’m more concerned about the temperatures.
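For anyone wanting to track the same numbers on a headless box, here is a minimal sketch that reads temperature and fan speed from `nvidia-smi`. It assumes the proprietary NVIDIA driver and its `nvidia-smi` tool are installed, which the article does not confirm. The function names are hypothetical, and the sample line in the comment is an illustrative value, not a real reading from this system. Some GeForce cards report certain fields as unsupported, so the parser treats a non-numeric fan value as missing.

```python
import subprocess

# Fields requested from nvidia-smi's --query-gpu option.
QUERY_FIELDS = "index,name,temperature.gpu,fan.speed"


def parse_gpu_line(line: str) -> dict:
    """Parse one CSV line such as '0, GeForce GTX 680, 62, 40' (illustrative)."""
    index, name, temp, fan = [part.strip() for part in line.split(",")]
    return {
        "index": int(index),
        "name": name,
        "temp_c": int(temp),
        # Fan speed may come back as e.g. '[Not Supported]' on some cards.
        "fan_pct": int(fan) if fan.isdigit() else None,
    }


def read_gpus() -> list:
    """Run nvidia-smi once and return one dict per GPU it reports."""
    result = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY_FIELDS}",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return [parse_gpu_line(l) for l in result.stdout.splitlines() if l.strip()]


# Usage on a machine with nvidia-smi available:
#     for gpu in read_gpus():
#         print(gpu)
```

Run before and after the loop goes in, something like this would give a like-for-like comparison of load temperatures without needing a desktop session.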